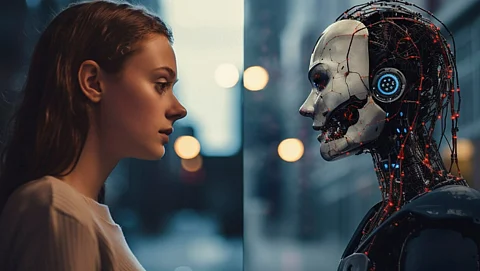

A significant security flaw has been identified in Microsoft 365 Copilot that causes the AI assistant to summarize email messages protected by confidentiality labels, circumventing established Data Loss Prevention (DLP) policies. This issue, tracked under Microsoft reference CW1226324, was first reported on February 4, 2026, and continues to pose risks to sensitive organizational data.

The Copilot feature, particularly the “Work Tab” Chat function, is actively producing summaries of confidential emails. This occurs even when DLP policies are set specifically to prohibit such processing. The incident report indicates that the flaw allows Copilot to pick up items in users’ Sent Items and Drafts folders, effectively bypassing the confidentiality protections that should be in place.

Technical Details and Industry Concerns

Microsoft’s internal investigation revealed that a code-level defect is the root cause of this issue. Under normal circumstances, sensitivity labels, when combined with DLP policies, would prevent Copilot from processing emails marked as confidential. The existence of this bug renders those controls ineffective for certain folders, exposing restricted content in AI-generated summaries.

This situation is particularly alarming for organizations in regulated sectors, such as healthcare, finance, and government. For these entities, maintaining email confidentiality is not just a best practice; it is a matter of compliance with legal obligations. The NHS has flagged the incident internally as INC46740412, underscoring its potential impact on public sector users who rely on Microsoft 365.

As of February 11, 2026, Microsoft has started to deploy a fix across affected environments and is contacting a subset of impacted users to gauge the effectiveness of the remediation. However, the rollout remains incomplete, and the issue persists for some organizations. Microsoft has committed to providing a remediation timeline as the situation evolves.

Implications for Organizations and Next Steps

The implications of this flaw are broad, affecting any organization using Microsoft 365 Copilot with confidentiality labels on their emails. Administrators are advised to monitor the Microsoft 365 admin center for updates related to reference CW1226324 and to scrutinize Copilot activity logs for any unusual access to sensitive content.
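
The log review described above could be approached along the following lines. This is a minimal Python sketch, assuming Copilot activity records have already been exported and parsed into dictionaries. The field names (`user`, `time`, `accessed_resources`, `name`, `sensitivity_label_id`) and the label identifiers are hypothetical placeholders, not Microsoft's actual audit schema, and would need to be mapped to whatever the real export contains.

```python
from typing import Iterable

# Hypothetical identifiers for labels the organization treats as confidential.
CONFIDENTIAL_LABEL_IDS = {"label-confidential", "label-highly-confidential"}


def flag_labeled_access(records: Iterable[dict], label_ids: set) -> list:
    """Return one finding per accessed resource that carries a restricted label."""
    findings = []
    for record in records:
        # Assumed layout: each record lists the resources Copilot touched.
        for resource in record.get("accessed_resources", []):
            if resource.get("sensitivity_label_id") in label_ids:
                findings.append({
                    "user": record.get("user"),
                    "time": record.get("time"),
                    "resource": resource.get("name"),
                    "label": resource.get("sensitivity_label_id"),
                })
    return findings


# Illustrative records only; real exports will look different.
sample_records = [
    {
        "user": "alice@example.com",
        "time": "2026-02-05T09:14:00Z",
        "accessed_resources": [
            {"name": "Q1 forecast draft", "sensitivity_label_id": "label-confidential"},
        ],
    },
    {
        "user": "bob@example.com",
        "time": "2026-02-05T10:02:00Z",
        "accessed_resources": [
            {"name": "Team lunch", "sensitivity_label_id": None},
        ],
    },
]

for finding in flag_labeled_access(sample_records, CONFIDENTIAL_LABEL_IDS):
    print(finding)
```

A filter like this only narrows down which interactions deserve a closer look. It does not confirm on its own that a summary exposed restricted content.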

The ability of the AI assistant to bypass DLP policies indicates a critical security gap. DLP controls are fundamental to enterprise data governance, and any tool, AI or otherwise, that can circumvent these controls undermines the security framework of an organization. Until a comprehensive fix is fully deployed, security teams may need to consider restricting Copilot access in environments that manage highly sensitive email communications.

Microsoft is expected to provide further updates on this matter by February 18, 2026, at 11:00 AM UTC.

This incident serves as a reminder of the complexities involved in managing AI tools within corporate environments, particularly when sensitive data is at stake.