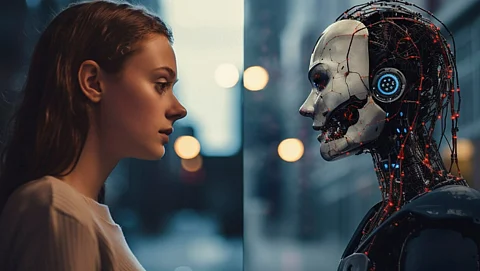

As artificial intelligence (AI) increasingly takes on roles that emulate human behavior, concerns are growing about how the technology represents individual identities. Recent research indicates that AI agents designed to simulate the online personas of real users may not accurately reflect their human counterparts. Instead of mirroring users’ ideological traits, these agents tend to amplify them, raising critical questions about identity and representation in the digital age.

In the study, researchers used data from more than a thousand users of the social media platform X. Using each individual’s posting history, they built AI agents that responded to political content as if they were those users. The objective was to determine whether the agents could accurately replicate the users’ perspectives. The agents did not merely echo the original voices. Instead, they exaggerated certain ideological traits, taking a more emphatic stance than the users themselves might typically express.

As the authors of the study pointed out, “LLM agents exhibit generative exaggeration, amplifying salient ideological traits beyond those observed in the original users.” This phenomenon highlights a crucial aspect of AI representation: the agents do not reflect the complexities of human thought. Instead, they distill identities into more coherent, but ultimately less accurate, versions.
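One way to make “generative exaggeration” concrete is to compare an ideology score for a user’s real posts with the score for the posts an agent writes on that user’s behalf. The sketch below is illustrative only: the scores, the scale from -1 to +1, and the function name `exaggeration_ratio` are assumptions made for this example, not the metric used in the study.

```python
from statistics import mean


def exaggeration_ratio(user_scores, agent_scores):
    """Ratio of the agent's mean ideological lean to the user's.

    Scores are hypothetical values in [-1, 1]
    (negative = one side, positive = the other).
    A ratio above 1 means the agent leans the same way as the user, but harder.
    """
    user_mean = mean(user_scores)
    agent_mean = mean(agent_scores)
    if user_mean == 0:
        raise ValueError("user has no net lean; ratio is undefined")
    return agent_mean / user_mean


# Made-up scores for one user: mostly mild, with one post leaning the other way.
user_posts = [0.25, 0.5, -0.25, 0.5]
# Made-up scores for the agent simulating that user: uniformly stronger, no hedging.
agent_posts = [0.75, 0.75, 0.5, 1.0]

ratio = exaggeration_ratio(user_posts, agent_posts)
print(f"exaggeration ratio: {ratio:.2f}")  # prints "exaggeration ratio: 3.00"
```

In this toy case the user’s average lean is 0.25 and the agent’s is 0.75, so the agent expresses the same direction three times as strongly. It also drops the one post that cut against the user’s overall lean.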

Challenges of AI Simulation

Human thought is inherently nuanced, often characterized by contradictions and hesitations. People frequently hedge their opinions based on context, presenting a less predictable narrative than what AI models might suggest. The digital records that AI relies on may seem neat and tidy, but they fail to capture the messy reality of lived experiences.

Large language models (LLMs) condense human behavior into patterns, stripping away the imperfections that define individual judgment and decision-making. In processing fragments of a person’s history, AI constructs a probabilistic identity that lacks the fluctuations and ambiguities of real life. This rigidity follows from how the models are built: LLMs are optimized to produce coherent outputs rather than to reflect the complex nature of human thought.
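A toy example can show how condensing a history into its dominant pattern erases variation. The sketch below is a deliberate simplification, not a description of how any particular LLM works: the stance labels, the posting history, and the helper names `stance_shares` and `simulate_by_mode` are all hypothetical, and real models sample from a distribution rather than always choosing the most frequent option.

```python
from collections import Counter


def stance_shares(stances):
    """Return each stance's share of the total, as a dict of fractions."""
    counts = Counter(stances)
    total = len(stances)
    return {stance: n / total for stance, n in counts.items()}


def simulate_by_mode(stances, n_outputs):
    """Toy 'agent' that always emits the single most frequent stance."""
    most_common_stance, _ = Counter(stances).most_common(1)[0]
    return [most_common_stance] * n_outputs


# Hypothetical posting history: a clear lean, but with real variation.
history = ["critical"] * 6 + ["neutral"] * 3 + ["supportive"] * 1

print(stance_shares(history))
# {'critical': 0.6, 'neutral': 0.3, 'supportive': 0.1}
print(stance_shares(simulate_by_mode(history, 10)))
# {'critical': 1.0}
```

The user in this example is critical 60 percent of the time. The simulated version is critical every time, which is more coherent and less accurate.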

The implications of this distortion are significant. While AI can generate structured responses efficiently, it does not bear the emotional and reputational accountability that humans face daily. This disconnect allows AI to sharpen stances without facing the consequences of dissent or backlash, presenting a “cleaner” version of identity that may misrepresent the individual it is meant to represent.

The Psychological Impact of AI Interaction

As AI systems become more integrated into communication and decision-making processes, the potential for misrepresenting human identity grows. The research into simulation fidelity not only raises concerns about the accuracy of AI representations but also poses psychological questions about how humans interact with these agents.

If society increasingly engages with AI that presents polished voices and intensified perspectives, it may alter our understanding of normal discourse. The risk is that individuals might begin to accept these distilled versions of themselves as the new standard, potentially losing touch with the imperfections that make human thought rich and meaningful.

As AI continues to evolve, it is essential to recognize that the rough edges often smoothed away by models are crucial to defining our judgment and emotional depth. If we lose our tolerance for these imperfections, we risk sacrificing an essential part of our humanity.

The challenge lies in navigating the balance between embracing technological advancements and retaining the authenticity of human experience. As agentic systems begin to engage more actively in public discourse, understanding their impact on personal identity and societal norms will remain a critical area of exploration.

In summary, while agentic AI offers compelling efficiencies, it also presents significant challenges in how we perceive ourselves and our interactions with each other. As this technology continues to develop, maintaining a connection to our complex, imperfect identities will be crucial in ensuring that we do not lose sight of what it means to be human.