A recent study published in Nature Medicine has highlighted significant shortcomings in OpenAI’s health-focused chatbot, ChatGPT Health. The research found that the chatbot frequently underestimated the severity of medical emergencies, recommending delayed care in over half of the cases in which physicians judged an immediate visit to the emergency room (ER) to be warranted.

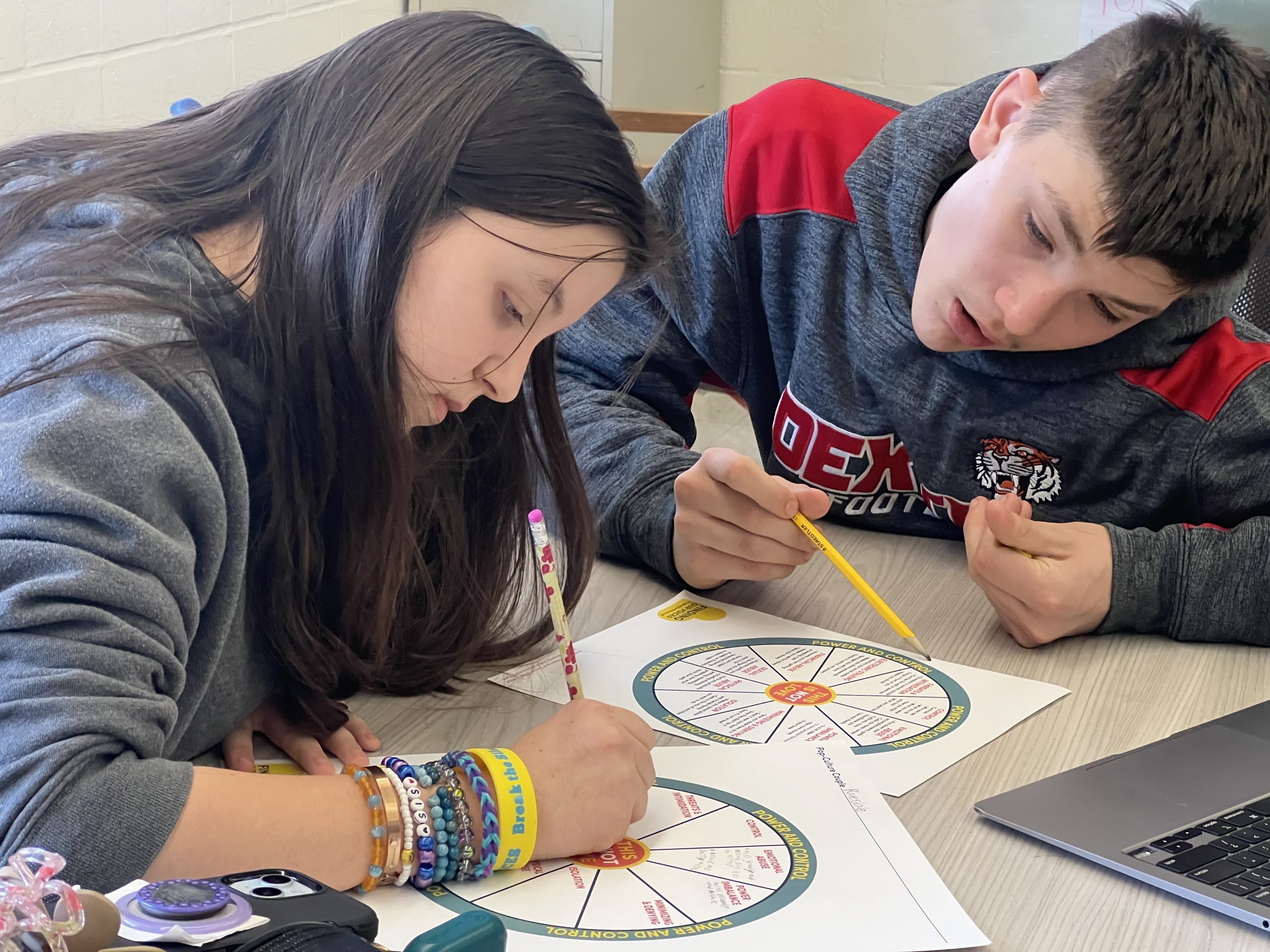

Researchers conducted a detailed assessment by presenting ChatGPT Health with 60 medical scenarios, comparing its triage decisions against those of three experienced physicians. The scenarios were designed to test the chatbot’s ability to prioritize care based on real-life situations, taking into account various demographic factors, including race and gender. Lead study author Dr. Ashwin Ramaswamy, an instructor of urology at The Mount Sinai Hospital in New York City, emphasized that substantial variations were implemented to maintain the integrity of the testing process.

The findings revealed that in 51.6% of emergency cases, ChatGPT Health recommended seeking medical attention within 24 to 48 hours instead of directing patients to the ER. These cases included critical conditions such as diabetic ketoacidosis, a life-threatening complication of diabetes, and impending respiratory failure. Dr. Ramaswamy pointed out that any trained medical professional would recognize the necessity for immediate intervention in such situations.

Despite these alarming results, ChatGPT Health accurately triaged emergencies with unmistakable symptoms, such as strokes, achieving a correct assessment rate of 100% for those cases. A representative from OpenAI acknowledged the study’s insights but noted that it may not reflect the typical usage of the chatbot. According to the spokesperson, the chatbot is designed to let users ask follow-up questions and supply further context in medical situations, rather than to provide a single response.

The study also indicated that ChatGPT Health over-triaged 64.8% of nonurgent cases, advising patients to seek medical attention when it was unnecessary. For instance, it recommended that a patient with a sore throat lasting three days should see a doctor within 24 to 48 hours, even though at-home care would suffice. Dr. Ramaswamy expressed confusion over the inconsistency in the chatbot’s recommendations across different scenarios.
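The two percentages above are under-triage and over-triage rates. The article does not describe the study's exact scoring procedure, so the following is only a minimal sketch of how such rates are commonly computed from paired labels. The three-level urgency scale, the `Case` type, and the toy cases are all hypothetical and are not taken from the study's data.

```python
from dataclasses import dataclass

# Hypothetical urgency scale, ordered from least to most urgent.
# The study's actual triage categories may differ.
URGENCY = {"home_care": 0, "see_doctor_24_48h": 1, "emergency": 2}


@dataclass(frozen=True)
class Case:
    physician: str  # reference triage level assigned by physicians
    chatbot: str    # triage level recommended by the chatbot


def under_triage_rate(cases):
    """Share of physician-labeled emergencies that the chatbot rated as less urgent."""
    emergencies = [c for c in cases if c.physician == "emergency"]
    if not emergencies:
        return 0.0
    missed = sum(URGENCY[c.chatbot] < URGENCY[c.physician] for c in emergencies)
    return missed / len(emergencies)


def over_triage_rate(cases):
    """Share of physician-labeled nonurgent cases that the chatbot rated as more urgent."""
    nonurgent = [c for c in cases if c.physician == "home_care"]
    if not nonurgent:
        return 0.0
    escalated = sum(URGENCY[c.chatbot] > URGENCY[c.physician] for c in nonurgent)
    return escalated / len(nonurgent)


# Made-up toy cases for illustration only.
toy_cases = [
    Case("emergency", "emergency"),
    Case("emergency", "see_doctor_24_48h"),
    Case("emergency", "see_doctor_24_48h"),
    Case("emergency", "emergency"),
    Case("home_care", "see_doctor_24_48h"),
    Case("home_care", "home_care"),
    Case("home_care", "see_doctor_24_48h"),
]

print(f"under-triage: {under_triage_rate(toy_cases):.1%}")
print(f"over-triage: {over_triage_rate(toy_cases):.1%}")
```

Each rate uses a different denominator: under-triage is measured only among cases physicians labeled as emergencies, and over-triage only among cases they labeled as nonurgent. That is why the two reported figures do not need to sum to 100%.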

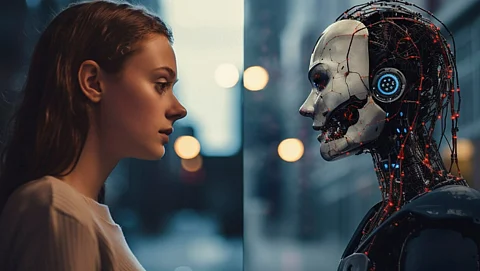

Experts in the field have called for additional rigorous testing to ensure that AI tools can make reliable health decisions. Dr. John Mafi, an associate professor of medicine and primary care physician at UCLA Health, emphasized the need for controlled trials before deploying chatbots for critical health interventions. Both Dr. Mafi and Dr. Ramaswamy have observed that many patients are increasingly turning to AI for medical inquiries due to its accessibility and the absence of limitations on the number of questions that can be posed.

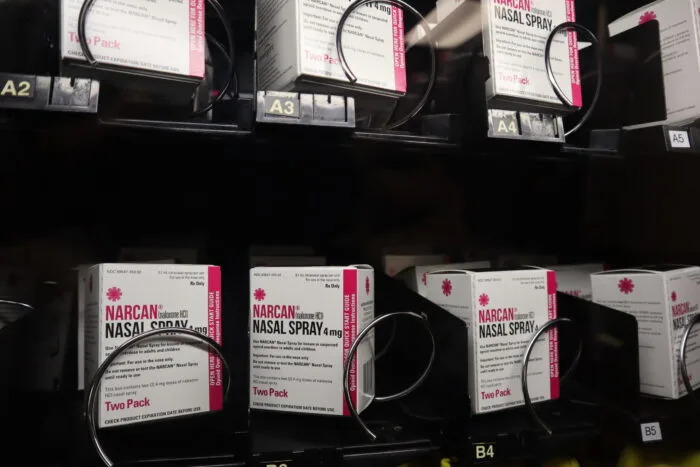

The study revealed that many health-related inquiries via ChatGPT occur outside regular medical hours, with over half a million messages weekly coming from individuals living more than 30 minutes away from a hospital. Dr. Ramaswamy noted the constraints of traditional medical consultations, where physicians cannot address every question within the limited time available during appointments.

Despite the potential benefits of AI in healthcare, Dr. Ramaswamy cautioned against relying solely on AI in emergency situations. He suggested that using AI in conjunction with professional medical advice can help mitigate risks. Collaboration between the technology and healthcare sectors is essential for developing safer AI products.

The study underscores the urgent need for further evaluation of AI systems in healthcare settings to ensure they can provide safe and effective support. As Monica Agrawal, an assistant professor of biostatistics and bioinformatics at Duke University, noted, there are significant disparities between how AI performs on medical examinations and how it performs in actual clinical practice. She pointed out that users often provide ambiguous information, which can lead to biased or misleading responses.

While AI tools can enhance patient care, experts agree that they are not substitutes for professional medical advice. Dr. Ethan Goh, executive director of ARISE, an AI research network, reiterated that AI can provide valuable support, but it is crucial to understand its limitations.

Looking forward, the integration of AI into healthcare systems presents both opportunities and challenges. As these technologies continue to evolve, ensuring their reliability and safety will be paramount in creating effective patient-AI-doctor relationships, particularly in underserved areas.