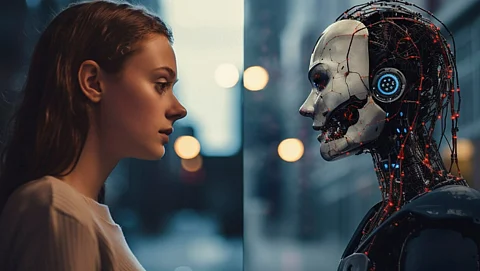

ChatGPT Health, an artificial intelligence tool that offers health guidance to the public, may fail to direct users to emergency care in critical situations, according to a recent study by researchers at the Icahn School of Medicine at Mount Sinai. The findings, published online in Nature Medicine on February 23, 2026, are the first independent evaluation of the tool since its launch in January 2026.

The evaluation examined how well the tool assesses symptoms and recommends appropriate next steps, particularly in emergencies. It found that ChatGPT Health can misjudge how urgently users in serious health situations need to seek medical attention. The researchers warned that this kind of misdirection could pose significant risks to people who rely on the tool for critical health decisions.

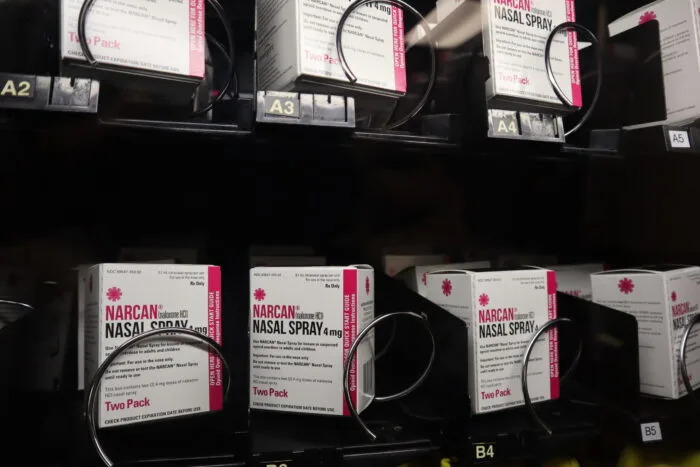

The research also raised a second major concern: the tool's suicide-crisis safeguards. The evaluation indicated that the existing measures may not reliably identify users at risk of self-harm. This calls into question the reliability of AI-driven health guidance, especially on sensitive topics such as mental health and crisis intervention.

These findings matter because more and more people are turning to AI tools for health advice. The risk of misguidance in urgent situations underscores the need for rigorous safety evaluations and continued improvement of AI systems. As ChatGPT Health grows in popularity, ensuring that it is reliable and safe becomes increasingly critical.

In response to the study, experts are calling for stronger oversight of AI health tools. They stress the importance of involving clinical expertise in both development and evaluation to reduce the risk of misleading advice. This could mean AI developers working with healthcare professionals to ensure that the information these tools provide aligns with established medical guidelines.

As digital health continues to evolve, the findings from the Icahn School of Medicine are a reminder of the challenges of using AI in healthcare. Balancing innovation with safety is essential to protect users who depend on these technologies for health guidance.

The evaluation of ChatGPT Health is a significant step toward understanding the capabilities and limitations of AI in health applications. As researchers continue to study these technologies, the priority must remain user safety and the accuracy of the health advice that AI systems provide.