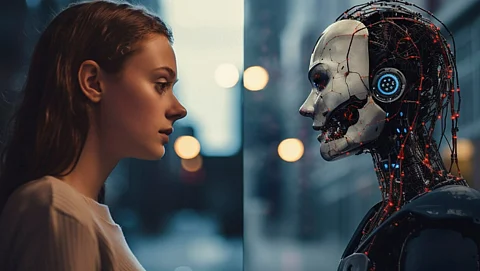

As generative AI (GenAI) tools become more common in legal and business environments, courts must increasingly determine how traditional legal principles, such as attorney-client privilege and the work-product doctrine, apply to data generated by these technologies. Recent court decisions highlight that while GenAI data may be considered discoverable electronically stored information (ESI), the circumstances surrounding its generation dictate whether it can be protected from disclosure.

One pertinent case is United States v. Heppner, decided on February 17, 2026, in the Southern District of New York. In this case, the defendant used a publicly available GenAI tool to analyze his potential legal exposure by entering factual and legal prompts. After he shared the AI-generated analyses with his defense counsel, federal agents seized his computer and the associated ESI during a search of his residence. The government sought to compel the production of this material.

Judge Jed S. Rakoff ruled that the AI-generated content was not protected by attorney-client privilege or the work-product doctrine. The court emphasized three key points: the GenAI platform was a third-party tool with no expectation of confidentiality; the materials were not created at the direction of counsel and thus did not facilitate legal advice; and sharing the AI-generated content with a lawyer afterwards did not retroactively grant it privileged status.

This ruling clarifies that attorney-client privilege applies to communications that are confidential and aimed at facilitating legal advice. It does not extend to documents that, while later useful for legal purposes, were not created under the supervision of an attorney. The decision also highlights the risks of unsupervised or exploratory use of GenAI tools, particularly on public platforms.

The work-product doctrine, designed to protect materials created by or for counsel in anticipation of litigation, is also under scrutiny in the context of GenAI. Courts are beginning to differentiate between GenAI data produced under the direction of counsel, which may qualify as work product, and GenAI data created independently for other purposes, which generally does not qualify for protection.

In another relevant case, Tremblay v. OpenAI, Inc., decided on August 8, 2024, the court ruled on the status of GenAI prompts and outputs used by plaintiffs alleging copyright infringement. The plaintiffs conducted targeted testing of ChatGPT to evaluate potential claims. While they produced the prompts used in their complaint, they withheld others, claiming that those prompts reflected counsel’s mental impressions and litigation strategy.

The court agreed in part with the plaintiffs, determining that unused prompts and related data constituted opinion work product prepared in anticipation of litigation. It also noted that producing some AI interactions did not waive protection over all related materials. This case illustrates how difficult it can be to determine whether privilege or work-product protection applies to GenAI data, and why such information needs careful handling.

As these cases demonstrate, the protection of sensitive data generated by GenAI tools is precarious. The risk of waiving privilege increases when confidential information is entered into GenAI systems that allow for data retention, reuse, or training. Moving forward, courts evaluating claims of privilege over GenAI data are likely to focus on several factors, including the nature of the AI platform used, contractual or policy-based confidentiality protections, and whether the use of AI was directed or supervised by legal counsel.

To mitigate risks associated with GenAI, legal professionals are advised to use closed, enterprise platforms that limit data retention and storage. They should treat GenAI as a supervised assistant, ensuring that prompts and outputs are generated and reviewed under counsel’s direction. It is also essential to avoid including privileged information in prompts and to clearly label any protected materials, while recognizing that such labels are not conclusive in themselves.

Finally, it is important to address the handling of GenAI data in ESI agreements and to seek orders under Federal Rule of Evidence 502(d) to minimize waiver risks. Privilege logs should meticulously document what GenAI-created data exists, how it was generated, who was involved, and under what confidentiality measures it was created.
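For teams that track such logs electronically, the four items above (what exists, how it was generated, who was involved, and what confidentiality measures applied) could be captured in a simple structured record. The following is only a minimal sketch: the class name, field names, and completeness check are hypothetical illustrations, not a court-prescribed format or any vendor's schema, and what a given court requires in a privilege log may differ.

```python
from __future__ import annotations

import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass(frozen=True)
class GenAILogEntry:
    """One hypothetical privilege-log row for a GenAI prompt or output."""
    doc_id: str                    # what exists: internal identifier
    description: str               # what exists: neutral description of the item
    created: date                  # when it was generated
    platform: str                  # how: tool used (e.g. a closed enterprise deployment)
    generated_by: str              # who: person who entered the prompt
    directed_by_counsel: bool      # who: whether counsel directed or supervised the use
    confidentiality_measures: str  # contract or policy terms on retention and training
    protection_claimed: str        # e.g. "attorney-client" or "work product"


def missing_fields(entry: GenAILogEntry) -> list[str]:
    """Return the names of text fields that were left blank."""
    return [
        name
        for name, value in asdict(entry).items()
        if isinstance(value, str) and not value.strip()
    ]


def to_csv(entries: list[GenAILogEntry]) -> str:
    """Serialize entries to CSV text, with a header row of field names."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=[f.name for f in fields(GenAILogEntry)]
    )
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()
```

A reviewer might run `missing_fields` on each entry before a log is served, so that blank platform or confidentiality fields are caught early. As with the labels discussed above, a complete log entry documents the basis for a claim of protection but does not establish it.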

As the integration of GenAI into legal practices continues to evolve, the implications for attorney-client privilege and work-product protection remain a critical concern for practitioners navigating this complex landscape.