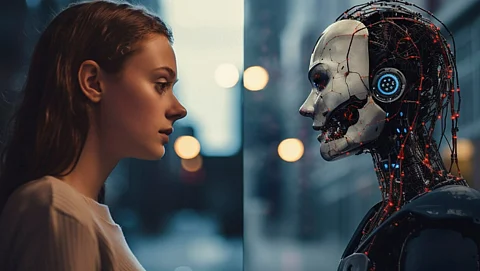

Chips&Media and Visionary.ai have announced a collaboration to develop what they describe as the world’s first fully AI-based image signal processor (ISP). The design aims to replace the conventional hardware-based ISPs that have been integral to digital imaging for decades. By leveraging artificial intelligence, the companies intend to shift the entire image formation process into software that runs on neural processing units (NPUs).

The focus of this partnership is on enhancing video processing capabilities in real time, which is particularly beneficial for low-light video scenarios. Traditional ISPs have not significantly evolved in their hardware architecture, often relying on fixed-function components that limit flexibility and scalability. As digital imaging demands grow, especially with the rise of smartphones, autonomous vehicles, and extended reality (XR) devices, both companies recognize the need for a structural transformation in image processing.

Oren Debbi, co-founder and CEO of Visionary.ai, stated, “This is the first full end-to-end ISP pipeline that runs entirely on an NPU, without relying on a hardware ISP at all.” He emphasized that existing pipelines often integrate neural blocks with a fixed-function ISP, whereas their approach completely replaces traditional ISPs with a neural imaging pipeline. This design allows for direct processing of RAW sensor data on NPUs or GPUs, enabling manufacturers to adjust tuning and optimization through over-the-air updates without altering the hardware.

At the heart of this approach is sensor-specific training. Visionary.ai has developed an automated training platform capable of generating a new model in a matter of hours from minimal input: a few short video clips. This rapid integration process significantly reduces the integration overhead typically associated with classical ISPs, allowing for broader scalability across various sensors and platforms.

AI-enhanced ISPs are already present in many smartphones and cameras, but both Chips&Media and Visionary.ai argue that these systems remain overly reliant on hardware. Current implementations often treat neural networks as isolated components that do not handle the core RAW data processing, which continues to be managed by traditional hardware and mathematical pipelines.

Debbi elaborated, “The image formation pipeline is neural-first, not a classic ISP with a few AI add-ons.” Conventional camera control functions such as exposure and white balance still operate in traditional ways, but the core image processing pipeline is now neural. As AI solutions for those control functions mature, Debbi anticipates a further shift that will improve image quality without the constraints of fixed hardware.

The implications for low-light conditions are particularly promising. Traditional ISPs tend to struggle with noise suppression and detail retention, often leading to artifacts such as halos or pixel bleed. As Debbi noted, “You see the biggest difference in the hard cases where classic ISPs have to trade off detail, noise, and artifacts.” The new AI pipeline is designed to deliver cleaner shadows, improved color stability, and fewer temporal artifacts, significantly enhancing video quality in challenging lighting situations.

While the initial focus is on video applications, Debbi acknowledges that still photography could also benefit from this technology. The architecture is inherently suited to processing sequences of images, which is crucial for producing high-quality HDR and low-light images. Most current neural imaging processes run after the ISP, on YUV or RGB data from which vital sensor information has already been lost. Visionary.ai’s expertise in RAW-domain processing could bridge this gap, either by replacing the ISP or by integrating into existing pipelines.
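To make the point about lost sensor information concrete, here is a minimal sketch, not a model of any real ISP or of Visionary.ai’s pipeline. It assumes a 12-bit linear RAW sensor and a toy "ISP" that only applies a gamma curve and quantizes to 8 bits; the function names and the gamma value of 2.2 are illustrative assumptions. It counts how many distinct output codes survive for a band of 256 distinct RAW codes.

```python
def toy_isp_output(raw_code, raw_bits=12, out_bits=8, gamma=2.2):
    """Map one linear RAW code to a gamma-encoded, quantized output code.

    Hypothetical stand-in for a real ISP: gamma plus quantization only.
    """
    raw_max = (1 << raw_bits) - 1   # 4095 for 12-bit RAW
    out_max = (1 << out_bits) - 1   # 255 for 8-bit output
    normalized = raw_code / raw_max
    return round((normalized ** (1.0 / gamma)) * out_max)


def count_surviving_levels(raw_codes):
    """Number of distinct output codes produced by the given RAW codes."""
    return len({toy_isp_output(code) for code in raw_codes})


# Two bands of 256 distinct RAW codes each (assumed 12-bit sensor).
shadow_codes = range(0, 256)         # darkest sixteenth of the range
highlight_codes = range(3840, 4096)  # brightest sixteenth of the range

shadow_levels = count_surviving_levels(shadow_codes)
highlight_levels = count_surviving_levels(highlight_codes)

print(f"shadows:    256 RAW codes -> {shadow_levels} output codes")
print(f"highlights: 256 RAW codes -> {highlight_levels} output codes")
```

In both bands, many RAW codes collapse onto the same output code, so a network that only sees the 8-bit result cannot tell them apart. A network operating on RAW data still has the original distinctions available.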

Another advantage of this software-defined AI ISP is its ability to optimize power usage. Debbi explained that the system supports various operating modes, allowing manufacturers to balance power consumption against image quality based on specific applications. “We’re able to run on a very small NPU and consume only slightly more than imaging with a traditional ISP, and that gap continues to shrink,” he said.

The WAVE-N NPU from Chips&Media serves as a high-throughput reference implementation for the AI ISP, demonstrating the capability of an end-to-end neural imaging pipeline in real-time video processing. Because the AI ISP is hardware-agnostic, manufacturers can adapt the software pipeline to different NPUs or GPUs according to their system-on-chip (SoC) architecture, power requirements, and cost constraints.

Despite the transformative potential of this technology, both companies acknowledge that fixed-function ISPs will not vanish immediately. “The center of gravity is clearly moving toward programmable AI compute,” Debbi remarked. For existing chip designs, Visionary.ai can integrate the AI ISP within months, whereas new chips can move more imaging functions into the AI pipeline, reducing the silicon area dedicated to the ISP.

As the imaging landscape evolves, software updates will become increasingly critical, allowing for faster adaptation to new sensors and use cases. The long-term trajectory suggests that software-defined imaging pipelines will eventually surpass traditional ISPs across various sectors. By unveiling their AI-based ISP at CES 2026, Chips&Media and Visionary.ai aim to position their collaboration as a pioneering step towards a new era in imaging technology, one that promises to redefine image quality delivery and scalability across the industry.