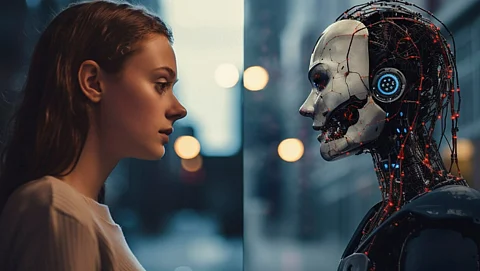

Artificial intelligence (AI) is reshaping the landscape of online content creation, enabling users to generate images, videos, and social media posts almost instantaneously. While these tools enhance productivity, they also pose a significant threat: the proliferation of misinformation. Recent events, particularly those related to the ongoing conflict in Iran, demonstrate how AI-generated visuals can mislead the public.

In March 2024, social media platforms experienced a surge in AI-created images purportedly depicting scenes from the Iran war. These visuals, which included explosions, damaged buildings, and soldiers in combat, initially appeared authentic to many viewers. However, the majority of these images were not based on real events but were produced using AI software. This phenomenon highlights how easily misinformation can spread when fabricated content is presented alongside genuine material.

Numerous viral images claiming to illustrate the realities of the Iran conflict have since been debunked. Some posts showed dramatic missile strikes or urban destruction, yet upon investigation, these visuals were confirmed to be artificially generated. In other instances, historical photographs from previous conflicts were altered and circulated as contemporary war imagery. Short video clips sourced from video games were also misrepresented as actual combat footage.

Platforms like X, TikTok, and Telegram facilitated the rapid dissemination of these false narratives. Many users shared the misleading content without verifying its authenticity, allowing it to reach millions before fact-checkers could intervene. This alarming trend reveals how quickly misinformation can travel online, especially when images are designed to appear realistic.

The implications of AI-generated misinformation extend beyond mere confusion. During wartime and crises, false visuals can incite fear and distort public perception. Studies show that people often trust images more than text, leading them to accept intense visual content as fact without scrutinizing the source. This tendency can significantly influence public opinion and further the spread of false narratives about critical global events.

A concerning consequence of this misinformation surge is the erosion of trust in authentic journalism. As more fabricated images circulate, the public may begin to question the validity of legitimate photographs and articles. Journalists must deliver accurate content in an environment where misinformation is rampant, which makes it harder to uphold the credibility of their work.

Experts warn that the user-friendliness of AI tools will likely exacerbate the problem of fake online content in the years to come. Because convincing visuals are now easy to create, the volume of digital misinformation is expected to grow.

The rise of AI-generated misinformation underscores the need for responsible technology use. While the technology itself is not inherently problematic, its misuse raises concerns about the integrity of information shared online. Technology companies are developing systems aimed at identifying false visual content and limiting its spread. Governments and researchers are also engaged in discussions to establish regulations governing the safe use of AI.

To combat misinformation effectively, users must exercise caution when sharing content online. Verifying sources and fact-checking claims before sharing can help curb the spread of false information. As AI technology continues to evolve, there is an urgent need to protect the integrity of information and maintain a clear line between fact and fiction in the digital age.