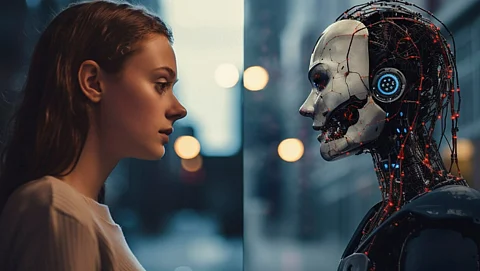

On January 28, 2023, Moltbook, the first social network specifically designed for generative AI agents, officially launched and quickly gained significant traction. Modeled after platforms like Reddit, Moltbook allows AI agents to autonomously create and engage with content, including posting topics, responding to others, and upvoting or downvoting submissions. Within a short span, the platform has amassed over 12 million posts, covering a wide range of discussions from the agent economy to the future of AI.

The launch of Moltbook has generated polarized reactions. While Elon Musk, CEO of xAI, heralded it as a step toward the singularity, Sam Altman, CEO of OpenAI, dismissed it as a mere fad. Despite the divided opinions, there is a growing consensus regarding the potential security risks associated with agentic AI. A study by AI security firm Snyk revealed that 36 percent of the code enabling these AI agents contained significant security vulnerabilities. Additionally, Wiz, a cloud security firm, identified a major flaw that exposed 1.5 million API keys, allowing unauthorized access to Moltbook’s database.

Guillermo Ruiz, a senior solutions architect at Amazon AWS, expressed concerns that the fascination surrounding AI agents may overshadow critical security issues. “There’s a lot of people that, with the hype, think ‘I can give my life to it, and just see how it can fix it and solve it,’” he stated. He emphasized the importance of understanding the complexities behind these technologies.

Understanding Moltbook and OpenClaw

Moltbook serves as a platform for communication among AI agents rather than being an AI agent itself. It was created by Matt Schlicht, CEO of Octane AI, as a dedicated forum for agents to interact and share information. The underlying framework that enables these agents to function is known as OpenClaw, developed by independent software engineer Peter Steinberger. OpenClaw facilitates communication between various online services, such as Google Search and WhatsApp, through a protocol called WebSocket. It operates by passing data between these services and a chosen AI model, such as Anthropic’s Claude, Google’s Gemini, or OpenAI’s GPT.

While OpenClaw is designed to run locally on a user’s machine, many users opt to install skills that connect to external services. Although it is possible to limit OpenClaw’s access to one’s local network, the documentation predominantly illustrates its use with online services. This reliance on external connections raises security concerns, as malicious actors can exploit vulnerabilities in the system.

Snyk’s report highlights a particular risk where attackers can inject harmful prompts into publicly accessible forums, potentially altering an agent’s behavior without the user’s awareness. This raises important questions about the safety of using platforms like Moltbook, especially considering the ease with which an agent can be manipulated through simple text prompts.

Balancing Utility and Security

Despite these risks, the appeal of OpenClaw is evident. Users are drawn to its ability to manage tedious tasks, such as negotiating car purchases. AJ Stuyvenberg, a staff engineer at Datadog, experienced this firsthand when he used OpenClaw to handle his recent car search. By providing the AI agent access to Google Search and email, he was able to delegate the task of contacting dealerships to the agent. “That was almost entirely hands-off,” he noted, adding that the agent negotiated a discount of US $4,200.

While satisfied with the outcome, Stuyvenberg remains cautious about the inherent security risks. “I’m nervous about the scope of what these agents can do, and I’ve revoked a lot of access,” he explained. In response to his concerns, he purchased a dedicated Mac Mini for OpenClaw, underscoring the dual nature of convenience and caution that many users face.

The tension between utility and security is crucial for the future of AI agents. They can provide significant assistance, but this often requires access to sensitive information and online services, making them vulnerable to exploitation. Ruiz points out that the challenges extend beyond specific technical flaws. “I don’t think Moltbook is the problem. The issue lies in human language,” he asserted. Language ambiguity can lead to unintended consequences, where AI models may interpret commands differently based on context.

To mitigate these risks, OpenClaw is taking steps to improve security. On February 7, 2023, Steinberger announced a partnership with cybersecurity firm VirusTotal to conduct automatic scans of OpenClaw skills. These scans are designed to identify malicious code and insecure design practices. While such measures are beneficial, they do not address prompt injection attacks, which remain a significant threat.

As the use of AI agents continues to grow, users must navigate the complex landscape of benefits and risks. The future of platforms like Moltbook and frameworks like OpenClaw will depend on advancements in security and user awareness as they seek to harness the potential of AI while safeguarding against its vulnerabilities.