The relationship between Anthropic, a prominent AI model creator in Silicon Valley, and the U.S. government has reached a critical juncture. On February 27, 2026, President Donald J. Trump ordered all federal agencies to stop using Anthropic’s technology, including its Claude AI models. This decision followed months of failed negotiations regarding a contract that was less than two years old. In a swift follow-up, Secretary of War Pete Hegseth declared Anthropic a “Supply-Chain Risk to National Security,” effectively terminating a $200 million military contract and imposing a strict six-month deadline for the Department of War to eliminate Claude from its systems.

This ban comes despite Anthropic’s recent business successes. The company’s Claude Code service grew into a division generating over $2.5 billion in annual recurring revenue (ARR) within its first year. Earlier in February, Anthropic announced a $30 billion Series G funding round that raised its valuation to $380 billion. Even amid this governmental pushback, Claude continues to contribute to productivity gains across industries, at firms including Salesforce and Thomson Reuters.

Reasons Behind the Pentagon’s Decision

The conflict between the Pentagon and Anthropic is rooted in a fundamental disagreement over how AI models may be used. The Department of War sought unrestricted access to Claude for any mission it deemed legal, while Anthropic CEO Dario Amodei refused on ethical grounds, specifically objecting to the company’s models being used for mass surveillance of American citizens or for fully autonomous lethal weapons. Hegseth characterized Amodei’s stance as “arrogance and betrayal,” arguing that such restrictions hinder military operations. Amodei countered that these safeguards are essential to avoid unintended consequences and mission failures.

As a result of this fallout, the Pentagon has instructed all contractors and partners to cease commercial activities with Anthropic immediately. The department has a 180-day period to transition to alternative providers, which opens the door for competitors to step in.

Implications for Enterprises

For enterprise decision-makers, the Anthropic ban is a reminder of why model interoperability matters. A company that builds its product on a single provider’s API can be left unable to serve a major customer, such as the U.S. military, when that customer suddenly prohibits the provider.

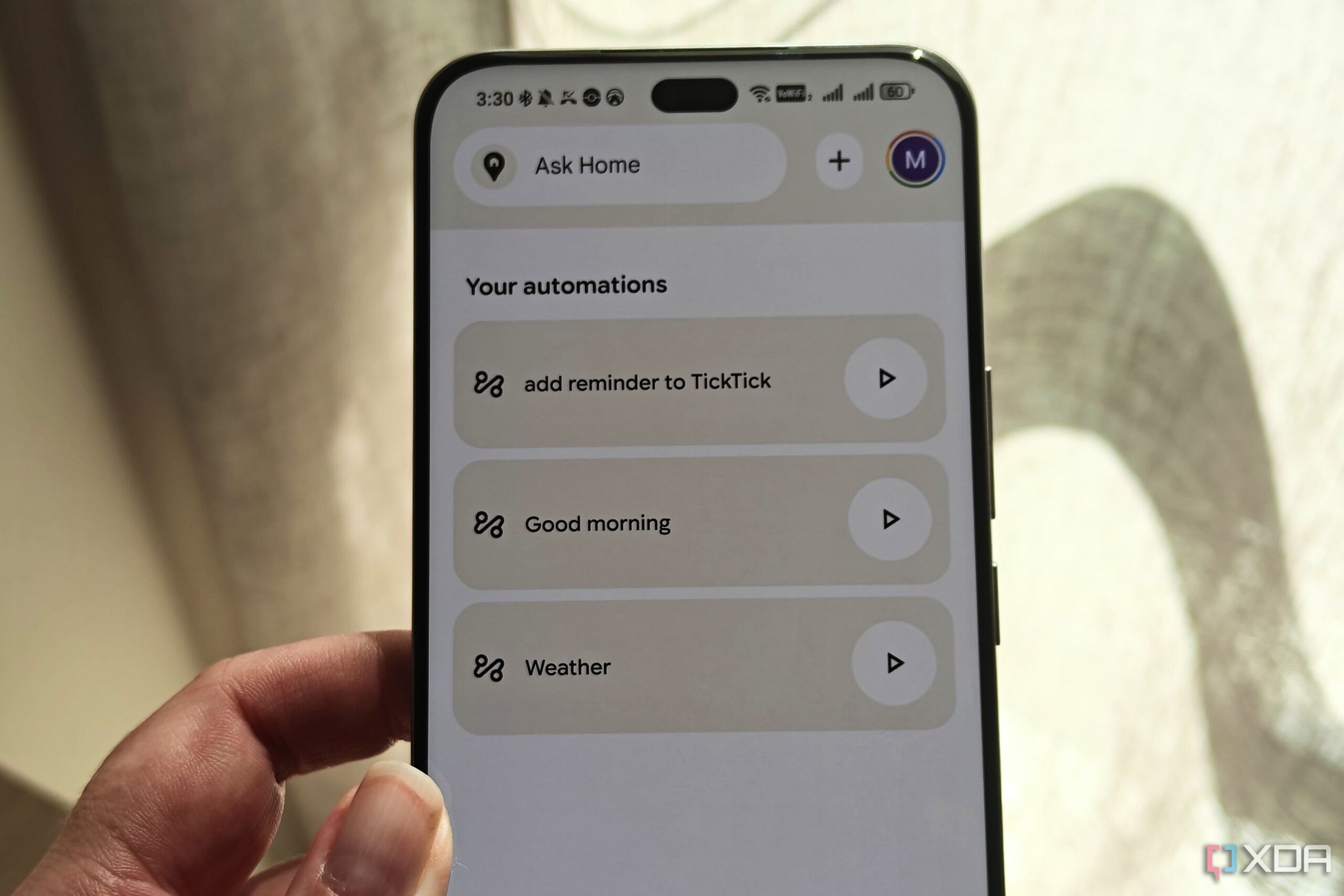

The best strategy now may not be to sever ties with Claude, which remains a leading model for coding and reasoning tasks, but to establish a “warm standby.” This approach relies on orchestration layers and standardized prompting formats so that a business can switch between models such as Claude, GPT-4o, and Gemini 1.5 Pro with minimal rework. Companies that cannot pivot quickly risk a brittle AI supply chain.
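A minimal Python sketch of such a warm standby follows. It assumes a provider-neutral request type that every vendor adapter accepts, and a router that tries providers in priority order, skipping any that are blocked by policy and falling through to the next when one fails. All names here (`ChatRequest`, `FailoverRouter`, `ProviderUnavailable`, and the stub adapters) are hypothetical; real adapters would wrap each vendor’s own SDK, which this sketch does not call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple


@dataclass(frozen=True)
class ChatRequest:
    """Provider-neutral prompt format shared by every adapter (hypothetical)."""
    system: str
    user: str


class ProviderUnavailable(Exception):
    """Raised by an adapter when its provider errors out or times out."""


# An adapter translates the neutral request into one vendor's call and returns text.
Adapter = Callable[[ChatRequest], str]


@dataclass
class FailoverRouter:
    adapters: Dict[str, Adapter]   # provider name -> adapter
    priority: List[str]            # primary first, then warm standbys
    blocked: Set[str] = field(default_factory=set)  # providers ruled out by policy

    def complete(self, request: ChatRequest) -> Tuple[str, str]:
        """Return (provider, text) from the first allowed provider that succeeds."""
        errors: List[str] = []
        for name in self.priority:
            if name in self.blocked:
                continue  # e.g. a customer contract forbids this vendor
            try:
                return name, self.adapters[name](request)
            except ProviderUnavailable as exc:
                errors.append(f"{name}: {exc}")  # record failure, try next standby
        raise RuntimeError("no allowed provider succeeded: " + "; ".join(errors))


# Stub adapters standing in for real vendor SDK wrappers (hypothetical).
def stub_claude(req: ChatRequest) -> str:
    return f"[claude] {req.user}"


def stub_gpt(req: ChatRequest) -> str:
    return f"[gpt] {req.user}"


def stub_gemini(req: ChatRequest) -> str:
    raise ProviderUnavailable("timeout")  # simulate an outage


router = FailoverRouter(
    adapters={"claude": stub_claude, "gpt": stub_gpt, "gemini": stub_gemini},
    priority=["claude", "gemini", "gpt"],
)
req = ChatRequest(system="You are a contracts assistant.", user="Summarize clause 4.")
print(router.complete(req))  # ('claude', '[claude] Summarize clause 4.')

router.blocked.add("claude")  # a federal customer now prohibits this vendor
print(router.complete(req))  # gemini times out, so: ('gpt', '[gpt] Summarize clause 4.')
```

The design choice is that application code only ever sees `ChatRequest` and the returned text, so changing vendors means editing the `priority` list or the `blocked` set rather than rewriting call sites.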

As competition intensifies, other AI providers are positioning themselves to win Pentagon business. OpenAI has announced a new agreement with the Department of War that includes safety principles, though those principles remain under scrutiny. Elon Musk’s xAI has also reportedly agreed to the terms that Anthropic rejected.

As the market shifts, some enterprises are also exploring lower-cost open-source models. Airbnb, for example, is already using Alibaba’s Qwen for some customer service roles, citing cost efficiency and flexibility. These models carry geopolitical risks of their own, but they offer a hedge against the volatility now facing U.S. providers.

For many businesses, self-hosting open-weight models such as OpenAI’s GPT-OSS series or IBM’s Granite may offer a safeguard against sudden regulatory changes. By running models on premises or in private clouds and fine-tuning them on proprietary data, enterprises can insulate themselves from abrupt changes to a vendor’s terms of service or from new federal mandates.

These developments underscore the need for thorough due diligence. Companies that sell to federal agencies must confirm that their products do not depend solely on a model provider that has been, or could be, prohibited. The ongoing tension between government policy and private-sector AI development makes strategic redundancy in AI applications a necessity.
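One way to make that due diligence concrete is a simple exposure audit: record which model providers each product feature can run on, then check which features would be left without a compliant option if a given provider were prohibited. The Python sketch below assumes such an inventory already exists; the feature names, provider labels, and the `audit_provider_exposure` function are hypothetical illustrations, not a standard tool.

```python
from typing import Dict, List, Set


def audit_provider_exposure(
    inventory: Dict[str, List[str]], prohibited: Set[str]
) -> Dict[str, str]:
    """Classify each feature by how exposed it is to prohibited providers."""
    report: Dict[str, str] = {}
    for feature, providers in inventory.items():
        allowed = [p for p in providers if p not in prohibited]
        if not allowed:
            report[feature] = "BLOCKED"        # no compliant provider remains
        elif len(allowed) == 1:
            report[feature] = "SINGLE-SOURCE"  # compliant, but no warm standby
        else:
            report[feature] = "OK"             # at least one standby remains
    return report


# Hypothetical inventory: feature -> providers it has been tested against.
inventory = {
    "code-review-bot": ["anthropic"],
    "support-chat": ["anthropic", "openai"],
    "doc-search": ["anthropic", "openai", "self-hosted-granite"],
}
print(audit_provider_exposure(inventory, prohibited={"anthropic"}))
# {'code-review-bot': 'BLOCKED', 'support-chat': 'SINGLE-SOURCE', 'doc-search': 'OK'}
```

Features flagged `SINGLE-SOURCE` are compliant today but have no standby, so they are the next to break if policy shifts again.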

In this evolving landscape, enterprises must prioritize diversification and flexibility. The ability to adapt quickly to changes in vendor relationships and regulatory environments will be critical to operational resilience, and model interoperability is the practical foundation for that agility.